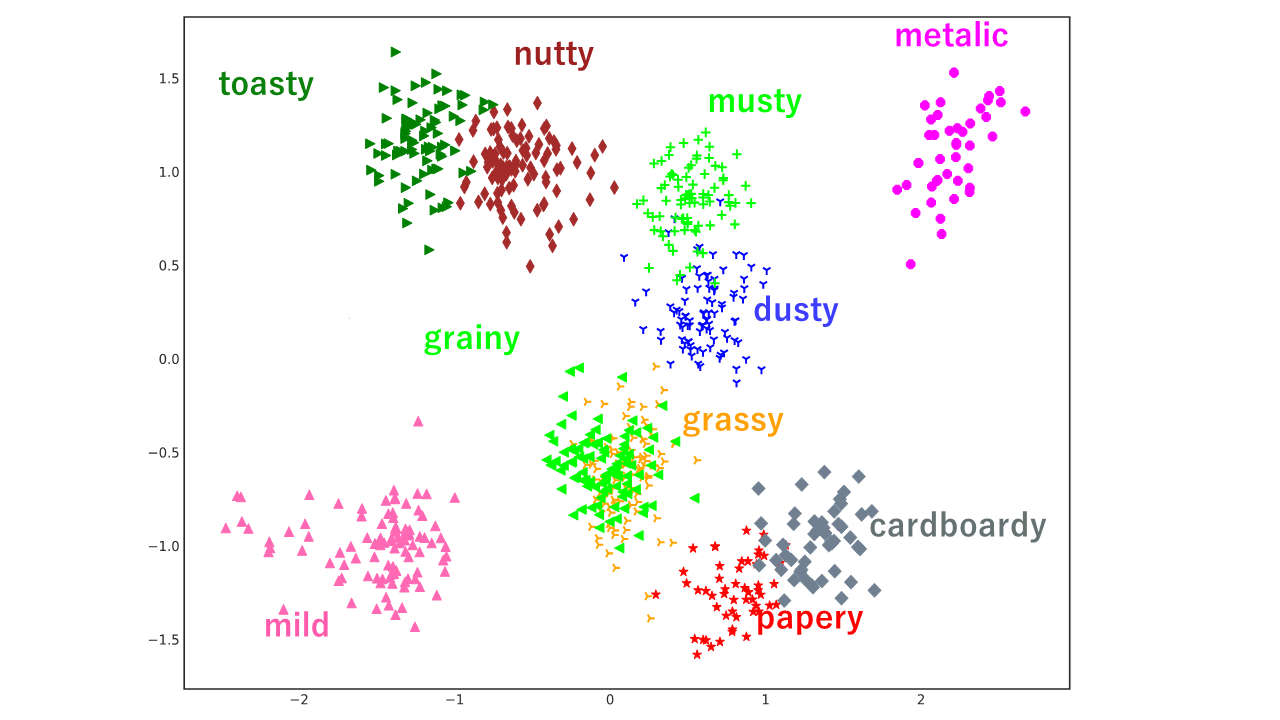

When people verbalize what they have perceived through their senses, they often express different meanings with the same word (e.g., different temperature ranges with the word *cold*) or the same meaning with different words (e.g., *hazy* and *cloudy*). These interpersonal variations in word meanings not only hinder efficient communication between people but also cause problems in natural language processing (NLP). To highlight such interpersonal semantic variations, a method for inducing personalized word embeddings is proposed. The method learns word embeddings through an NLP task while treating each word as a distinct token when it is used by a different individual. Review-target identification was adopted as the task to prevent irrelevant biases from contaminating the word embeddings. The scalability and stability of inducing personalized word embeddings were improved by using a residual network and by fine-tuning independently for each individual through multi-task learning with target-attribute prediction. Experiments on two large-scale review datasets confirmed that the proposed method was effective for identifying the target items and that the resulting word embeddings were also effective for sentiment analysis. Using the acquired personalized word embeddings, it was possible to reveal tendencies in the semantic variations of word meanings.
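The core idea of giving each individual their own version of a word can be sketched roughly as follows. This is a minimal illustration under our own assumptions, not the authors' implementation. It models a personalized embedding as a shared word vector plus a per-user residual vector, which is a simplification of the residual network mentioned above. It omits the review-target identification training objective entirely. It also uses a hypothetical `semantic_variation` score, the mean cosine distance to the centroid of all users' vectors, as one possible way to quantify how much a word's meaning varies across people. All class, function, user, and parameter names are illustrative.

```python
import math
import random


class PersonalizedEmbeddings:
    """Hypothetical sketch: each (user, word) pair gets its own vector,
    computed as a shared word vector plus a user-specific residual.
    Training (e.g., on review-target identification) is not shown."""

    def __init__(self, vocab, users, dim=8, seed=0):
        rng = random.Random(seed)
        self.dim = dim
        # Shared (user-independent) embedding for every word.
        self.shared = {w: [rng.gauss(0.0, 1.0) for _ in range(dim)] for w in vocab}
        # Per-user residuals start at zero, so every user initially shares
        # the same meaning for each word; training would move them apart.
        self.residual = {(u, w): [0.0] * dim for u in users for w in vocab}

    def lookup(self, user, word):
        """Return the personalized vector: shared vector + user residual."""
        base = self.shared[word]
        delta = self.residual[(user, word)]
        return [b + d for b, d in zip(base, delta)]


def cosine(a, b):
    """Cosine similarity of two non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb)


def semantic_variation(emb, word, users):
    """Hypothetical score: mean cosine distance between each user's vector
    for `word` and the centroid of all users' vectors for that word."""
    vecs = [emb.lookup(u, word) for u in users]
    centroid = [sum(col) / len(vecs) for col in zip(*vecs)]
    return sum(1.0 - cosine(v, centroid) for v in vecs) / len(vecs)


users = ["alice", "bob"]
emb = PersonalizedEmbeddings(["cold", "hazy"], users)
print(semantic_variation(emb, "cold", users))  # ~0.0: no personalization yet

# Simulate training having shifted bob's meaning of "cold".
emb.residual[("bob", "cold")] = [5.0 if i % 2 == 0 else -5.0 for i in range(emb.dim)]
print(semantic_variation(emb, "cold", users))  # > 0: meanings now diverge
```

Because the residuals start at zero, every user initially shares a single meaning per word and the score is about 0. It grows only as individual residuals move apart, which keeps words with little per-user evidence close to the shared meaning.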

Publication

- Daisuke Oba, Shoetsu Sato, Satoshi Akasaki, Naoki Yoshinaga, Masashi Toyoda, Personal Semantic Variations in Word Meanings: Induction, Application, and Analysis, Journal of Natural Language Processing, 2020, Volume 27, Issue 2, Pages 467-490, Released on J-STAGE September 15, 2020, Online ISSN 2185-8314, Print ISSN